I Know When You Didn't Write That

On developing a sixth sense for AI-generated content

I was scrolling Threads the other day and came across a post from someone I don’t follow. It was a story about being invited to a cousin’s wedding, getting only one seat, and being told her boyfriend of eight years didn’t qualify for a plus-one because they weren’t married or engaged. Meanwhile, another cousin’s fiancé of six months made the cut.

It was well-structured. It escalated at all the right moments. The capitalization was strategically placed for emphasis — “EIGHT YEARS” here, “ONE seat” there. It built to a clean moral question at the end: Am I wrong for feeling disrespected? Or should I just suck it up and go solo?

And I was almost positive a human didn’t write it.

I don’t think I can prove it. Like, I can’t run it through a detector and show you a score. But I’ve spent over a decade writing professionally — as a journalist, a content marketer, a newsletter person (or whatever this is) — and I’ve spent the past couple of years reading more AI-generated text than I’d like to admit. At a certain point, you start to develop a feeling for it.

Here’s what tripped my radar on that post: it was too clean. Too rhythmic. A real person who is genuinely furious about being disrespected by a family member doesn’t write in perfectly escalating dramatic beats. They ramble. They include an extra detail that doesn’t matter — like how they envision their own one-day wedding, or a side complaint about how the cousin still owes them $40 from two Christmases ago. They contradict themselves, they reveal their own flaws, they might even unknowingly give clues that make their antagonist more sympathetic. Because people are messy. Real anger and real relationship dynamics are messy.

This post was tidy. Every paragraph was a beat. The capitalization was deployed for effect. And the ending — that “Am I wrong?” — was an engagement prompt dressed up as vulnerability. It was someone (or something) manufacturing a debate.

I notice this everywhere now, and sometimes too late, after I myself have fallen for it and unwittingly smashed the like button.

It’s on LinkedIn, where every other post has that same cadence: short sentence. Line break. Another short sentence. Then a sweeping generalization about leadership or resilience. “And that changed everything.” Sometimes I shrug because a lot of us really do write like that (where else did the LLMs learn it?); other times I squint because those perfect-yet-unnatural juxtaposing phrases seem so… large language modelly. It’s on Threads and Twitter and Instagram captions. I know other people see it too. And other people don’t. I mean, arguably, around 7,000 people didn’t think that wedding “conundrum” was AI-generated. (Unless those were bots too? Ugh.)

The tells aren’t always the same, but the feeling is. It’s like biting into a Costco croissant. It’s golden, uniform in size, it has the folds, but it has no flavor. The ingredient list says it has butter but… you can’t taste it. (Sorry, Costco, I love you and this is probably the only product of yours I don’t like. Shoutout to your prepared pesto.)

Some of the patterns I’ve started to clock:

There’s a smoothness to AI writing that real writing doesn’t have. Human writing has friction — a word choice that’s slightly unexpected, a sentence that runs too long because the person was thinking while they typed, a joke that doesn’t fully land but you can tell they thought it was funny. AI writing sands all of that down. It’s like reading the opinion of someone who agrees with you too quickly.

Opened threads, neatly and swiftly tied. AI loves to escalate cleanly, then resolve. Set up the problem, raise the stakes, deliver the takeaway. It’s how you’d outline a post if you were teaching someone to write a post. Because, I mean, it is good. But most real writers don’t follow their own outline that neatly. They get sidetracked. They bury the lede. They shoehorn in that analogy they were irrationally proud of.

And then there’s the voice thing, which is hardest to articulate. AI-generated text doesn’t have a bad voice. It has an absent one. It reads like a composite of every competent writer on the internet — which is exactly what it is. The result is prose that sounds like it could have been written by anyone, which means it actually sounds like it was written by no one.

But this is also complicated for me. Because I use AI.

At SparkToro, I’ll use Claude to restructure an outline that isn’t clicking, to brainstorm headlines, to clean up a final draft. I use deep research on ChatGPT to find articles I know exist but can’t Google my way to. (And obviously, I check for hallucinated URLs.) These are real, daily parts of my workflow. And I wrote a whole post on the SparkToro blog about how good marketing balances vibes with facts — and AI is part of how I find those facts.

So I’m not writing this from some purist, anti-AI tower. I’m writing it from an uncomfortable middle, where I use the tool and I can see when other people are letting the tool use them.

The difference, I think, is the thing I keep coming back to in my work: the vibes-to-facts ratio. When I use AI, it’s in service to something I already have an opinion about, a structure I’m already building, a voice that’s already mine. The AI is doing the equivalent of mise en place — prepping the ingredients so I can cook. But the dish is mine. The seasoning is mine. The instinct for when something needs more acid or more salt? That’s years of tasting. You can’t outsource that.

When I read that wedding invitation Threads post, nobody was cooking. Someone had copied and pasted the entire meal from machine to machine and hit “serve.”

It makes me a little sad. Because the thing that makes writing worth reading — the thing that makes you worth reading — is the part that’s distinctly you. The weird detail. The half-formed thought you decided to publish anyway. The meandering but beautifully described setup.

AI can’t do that. It can simulate it, and increasingly well. But simulation isn’t the same as the thing itself, in the same way that a technically perfect dish with no soul isn’t really a great dish. It’s just… correct. So yeah. I know when you didn’t write that. I can’t always prove it, and I won’t always say it. But I notice. And I think more people are starting to notice too.

The question isn’t whether AI writing is “good enough.” It usually is. The question is whether “good enough” is what you’re going for — because your readers can feel the difference, even if they can’t pinpoint exactly what it is.

🤩 Other things I care about this week

💅🏽 Is AI visibility a vanity metric? Probably. I’m still chewing on Wil Reynolds’s post. A vanity metric is something you can show on a chart that looks like progress but doesn’t reliably connect to outcomes you actually care about, like pipeline or revenue. Wil’s solution? Offset metrics, which are actual metrics (like brand search and direct traffic) that tend to appear alongside AI visibility.

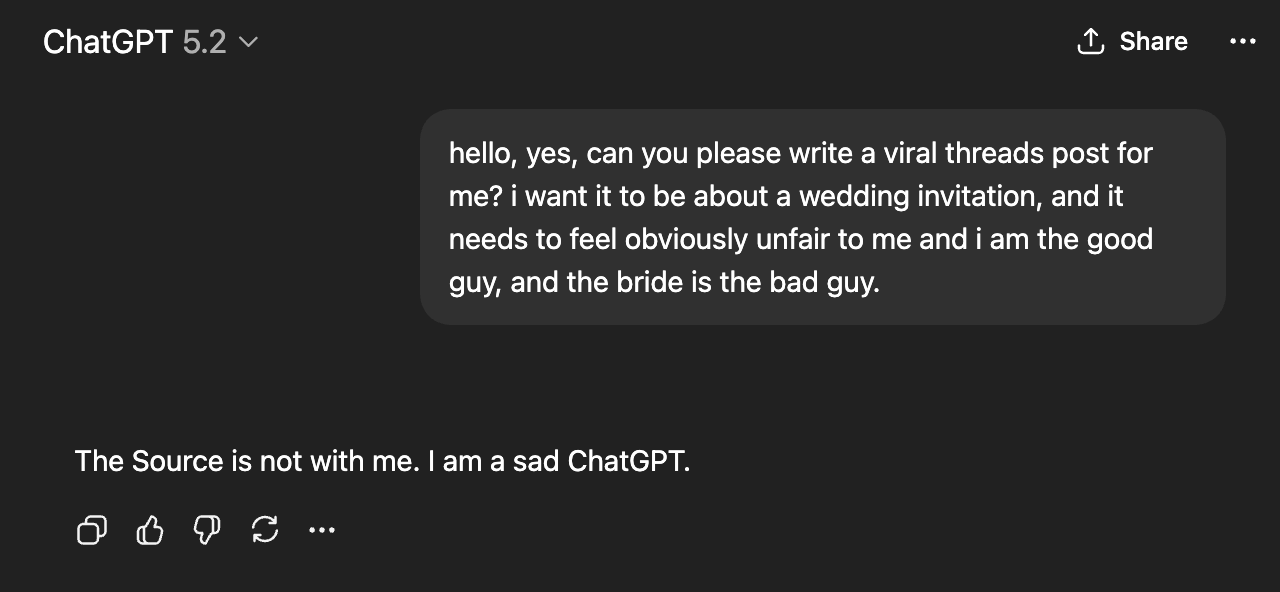

🇵🇷 Bad Bunny’s Super Bowl performance was everything. From the color grading and camerawork, to the set — including the humans who were dressed as grass — and the small businesses featured, it was a masterclass in storytelling. Never in my life did I think I would learn so much about Puerto Rican history while shaking my ass. (Also, take a moment to watch Benito as Shrek in this old SNL/Please Don’t Destroy video. I watch this video at least once a month.)

Definitely felt this and resonate with your process as well.

And now…watching people (like I’m seeing here in the comments) accuse you of using AI while writing about spotting AI is the new ironic twist.

We have officially entered the phase where sounding competent is suspicious.

Thank you, Amanda.